Confusion matrix

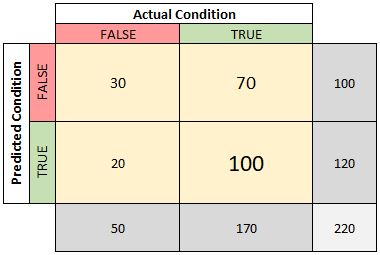

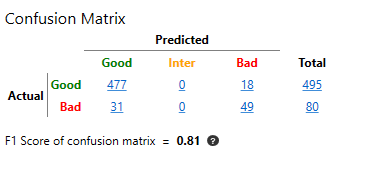

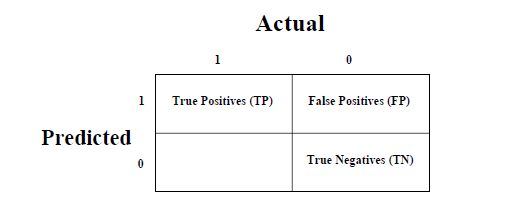

Confusion matrices speed up the analysis of statistical data and make the results easier to decipher through data visualization. They offer a way to analyze errors in statistics, data mining, and even certain medical examinations.

To compute a confusion matrix, you need a test dataset or a validation dataset along with the expected result values. A prediction is made for each line of the test dataset. The matrix then records the number of correct and incorrect predictions for each class, organized by expected result and by prediction. Each row of the table corresponds to a predicted class and each column to a real class, so the predictions are entered in the rows under the actual classes. Each result falls into one of four groups: a correct positive prediction is a "true positive", a correct negative prediction is a "true negative", an incorrect positive prediction is a "false positive", and an incorrect negative prediction is a "false negative".

The advantage of these matrices is that they are very simple to read and understand. They let you review the data and statistics quickly, analyze model performance, and identify trends that may suggest changes to specific settings.
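The counting described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names `confusion_counts` and `confusion_table` and the tiny spam/ham dataset are hypothetical and do not come from any library. The table follows the convention used in the text, with rows for predicted classes and columns for actual classes.

```python
from collections import Counter


def confusion_counts(actual, predicted, positive):
    """Count TP, FP, FN and TN, treating `positive` as the positive class."""
    counts = Counter()
    for truth, guess in zip(actual, predicted):
        if guess == positive:
            # Positive prediction: correct (TP) or incorrect (FP).
            counts["TP" if truth == positive else "FP"] += 1
        else:
            # Negative prediction: incorrect (FN) or correct (TN).
            counts["FN" if truth == positive else "TN"] += 1
    return counts


def confusion_table(actual, predicted, labels):
    """Build the matrix with rows = predicted class, columns = actual class."""
    index = {label: i for i, label in enumerate(labels)}
    table = [[0] * len(labels) for _ in labels]
    for truth, guess in zip(actual, predicted):
        table[index[guess]][index[truth]] += 1
    return table


# Hypothetical test dataset: expected values and one prediction per line.
actual = ["spam", "spam", "ham", "ham", "spam", "ham"]
predicted = ["spam", "ham", "ham", "spam", "spam", "ham"]

counts = confusion_counts(actual, predicted, positive="spam")
table = confusion_table(actual, predicted, labels=["spam", "ham"])

for label, row in zip(["spam", "ham"], table):
    print(f"predicted {label}: {row}")
```

The diagonal of `table` holds the correct predictions and the off-diagonal cells hold the errors, which is what makes the matrix quick to read.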

In addition to machine learning, the confusion matrix is used in the fields of statistics, data mining, and artificial intelligence.

Also known as an error matrix, a confusion matrix is a two-dimensional matrix that makes the performance of a classification algorithm visible. It is a summary of prediction results on a classification problem: the predictions are compared with the actual values, and correct and incorrect predictions are counted and broken down by class. The matrix shows where the classification model falls short when making predictions, so it helps to find out which errors are being made and to determine their exact type.

In R, the function `calculateConfusionMatrix(pred, relative = FALSE, sums = FALSE, set = "both")` from the mlr package calculates the confusion matrix for a (possibly resampled) prediction. Here, rows indicate true classes and columns indicate predicted classes. The marginal elements count the number of classification errors for the respective row or column, i.e., the number of errors when you condition on the corresponding true (rows) or predicted (columns) class. The bottom right element displays the total number of errors. A list is returned that contains multiple matrices.

If `relative` is TRUE, two additional matrices are calculated, giving three in total: one with absolute values and two with relative values. The relative confusion matrices are normalized by rows and by columns respectively. If `relative` is FALSE, only the absolute value matrix is computed. If `sums` is TRUE, the absolute number of observations in each group is added. `set` specifies which part(s) of the data are used for the calculation. Possible values are "train", "test", or "both", and the default is "both". If `set` equals "train" or "test", the `pred` object must be the result of a resampling, otherwise an error is thrown.

The S3 `print` method for `ConfusionMatrix` objects accepts `x`, `both` (default TRUE) and `digits` (default 2). If `both` is TRUE, both the absolute and relative confusion matrices are printed. `digits` sets how many digits after the decimal point are printed and is only relevant for relative confusion matrices. The print function shows the relative matrices in a compact way so that both row and column marginals can be seen in one matrix.

Note that for resampling no further aggregation is currently performed. All predictions on all test sets are joined into a vector yhat, as are all labels into a vector y, and the two are tabulated as if both were computed on a single test set. This probably mainly makes sense when cross-validation is used for resampling.
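To make the marginal error counts and the two relative matrices concrete, here is a minimal Python sketch of the behavior described above. It is not the R function's implementation. The helper names `confusion_with_margins`, `normalize_rows` and `normalize_columns` and the sample vectors are assumptions made for illustration. Rows are true classes and columns are predicted classes, as in the description of `calculateConfusionMatrix`.

```python
def confusion_with_margins(y, yhat, labels):
    """Absolute matrix (rows = true, columns = predicted) plus error margins."""
    k = len(labels)
    index = {label: i for i, label in enumerate(labels)}
    counts = [[0] * k for _ in range(k)]
    for truth, guess in zip(y, yhat):
        counts[index[truth]][index[guess]] += 1
    # Errors per true class: row total minus the diagonal entry.
    row_err = [sum(counts[i]) - counts[i][i] for i in range(k)]
    # Errors per predicted class: column total minus the diagonal entry.
    col_err = [sum(counts[i][j] for i in range(k)) - counts[j][j]
               for j in range(k)]
    with_margins = [counts[i] + [row_err[i]] for i in range(k)]
    # Last row holds the column errors; bottom right is the total error count.
    with_margins.append(col_err + [sum(row_err)])
    return counts, with_margins


def normalize_rows(counts):
    """Relative matrix conditioned on the true class (each row sums to 1)."""
    return [[c / sum(row) if sum(row) else 0.0 for c in row] for row in counts]


def normalize_columns(counts):
    """Relative matrix conditioned on the predicted class (columns sum to 1)."""
    k = len(counts)
    col_sums = [sum(row[j] for row in counts) for j in range(k)]
    return [[row[j] / col_sums[j] if col_sums[j] else 0.0 for j in range(k)]
            for row in counts]


# Hypothetical labels (y) and predictions (yhat), joined over all test sets.
y = ["a", "a", "a", "b", "b", "c", "c", "c"]
yhat = ["a", "a", "b", "b", "c", "c", "c", "a"]

counts, with_margins = confusion_with_margins(y, yhat, labels=["a", "b", "c"])
by_row = normalize_rows(counts)
by_col = normalize_columns(counts)

for row in with_margins:
    print(row)
```

Reading `by_row` answers "given the true class, how often was each class predicted?", while `by_col` answers "given the predicted class, how often was each class the true one?".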